PocketLLM

Your AI runs from a USB stick. Plug in. Chat. Unplug. Gone.

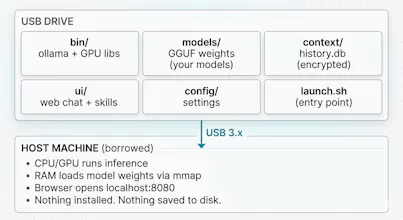

PocketLLM bundles an LLM runtime, model weights, and a chat UI on a USB drive. Plug it into any Mac or Linux machine, run one command, and get a local AI: no install, no cloud, no trace. Unplug and nothing remains on the host.

Hey Product Hunt! I'm Vraj, a new grad CS student, and I built PocketLLM because I was frustrated with one thing: every time I set up a local LLM, it ate 5–10GB of my SSD — and I had to redo it on every machine.

So I asked: what if the AI just lived on a USB stick?

PocketLLM bundles everything on a single drive. Plug it in, run one command, and you have a local AI chatbot. No install. No cloud. Unplug and nothing remains on the host machine.

The part I'm most proud of: I benchmarked USB vs. SSD and found that, after the initial model load, inference speed is identical at 54 tokens/sec on both. The USB's only penalty is a ~30 second cold start on first chat. After that, it's all RAM.
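For anyone who wants to run a similar USB vs. SSD comparison, here is a minimal sketch of one way to measure it. This is not necessarily how the numbers above were produced. It assumes an Ollama server is listening on its default local port (11434) and relies on the timing fields Ollama returns from `/api/generate` (`eval_count`, `eval_duration`, `load_duration`, with durations in nanoseconds). The helper names are made up for illustration.

```python
import json
import urllib.request

# Assumption: Ollama's default local endpoint. Adjust if your setup differs.
OLLAMA_URL = "http://localhost:11434/api/generate"


def tokens_per_second(response: dict) -> float:
    """Generation speed: tokens produced divided by generation time.

    Ollama reports eval_duration in nanoseconds, so convert to seconds first.
    """
    return response["eval_count"] / (response["eval_duration"] / 1e9)


def load_seconds(response: dict) -> float:
    """Time spent loading the model (the cold-start cost), in seconds."""
    return response.get("load_duration", 0) / 1e9


def run_prompt(model: str, prompt: str) -> dict:
    """Send one non-streaming prompt and return Ollama's JSON response."""
    payload = json.dumps(
        {"model": model, "prompt": prompt, "stream": False}
    ).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as reply:
        return json.load(reply)
```

To compare drives, call `run_prompt` with the same model name and prompt once with the models stored on the USB stick and once with them on the SSD, then print `load_seconds(result)` and `tokens_per_second(result)` for each run. The first call after plugging in captures the cold start. Repeat calls show steady-state speed.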

It's fully open source, works with any Ollama model, and runs on macOS and Linux. I'd love to hear what you think, and if you try it, let me know what models you run on it!

GitHub: https://github.com/vraj00222/poc...

Website: https://pocketllm-site.vercel.app/