Launching today

Perpendo

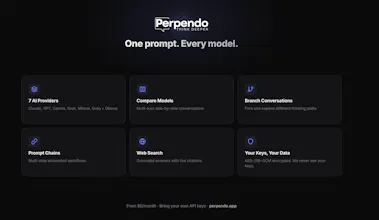

One prompt, every AI model. Think deeper.

2 followers


Perpendo connects Claude, GPT, Gemini, Grok, Mistral, and Groq in one interface. Bring your own API keys and pay only for what you use. Compare models side by side with parallel streaming. Branch any conversation to explore different paths. Build multi-step prompt chains. Search the web with cited sources. Track costs across all models. API keys are encrypted with AES-256-GCM. We never see your keys or read your conversations. Free to start. Plus is $5/mo, Pro is $9/mo, and the self-hosted version is a one-time $49 with Ollama support.


Thanks for checking out Perpendo! Happy to answer any questions.

Why I built this: I use AI constantly for my doctoral research, coaching practice, and business planning. But I was frustrated juggling Claude, ChatGPT, and Gemini in separate tabs: paying $20/month for each, losing conversations, and never being able to compare their answers. I wanted one place to think with all of them. Nothing that existed did it well enough, so I built it.

A few things I'm especially proud of:

Conversation branching: Fork any conversation at any point, explore a different path, and navigate between branches with a visual tree. This changes how you think with AI.

Continuous model comparison: Send the same prompt to multiple models, then keep the conversation going. Watch how Claude and GPT diverge over multiple turns on the same topic. As far as I know, no other tool does multi-turn comparison.

Built by a non-engineer: I'm a rehabilitation counselor. The entire platform was built using Claude Code. If that doesn't convince you AI is changing who can build software, I don't know what will.

Self-hosted option: Download, unzip, double-click. No Docker, no terminal. Connect Ollama for fully private, zero-cost local AI.
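
For anyone curious how conversation branching can be pictured: one way is a tree of messages, where a fork is simply a second reply attached to the same parent. The sketch below is a minimal illustration in Python, not Perpendo's actual code; the `Message` class and its `reply` and `path` methods are hypothetical names made up for this example.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass(eq=False)  # identity-based equality avoids comparing cyclic parent/child links
class Message:
    """One turn in a conversation (hypothetical structure, for illustration only)."""

    role: str
    text: str
    parent: Optional[Message] = field(default=None, repr=False)
    children: list[Message] = field(default_factory=list, repr=False)

    def reply(self, role: str, text: str) -> Message:
        """Attach a new message under this one. Calling it twice on the same node forks."""
        child = Message(role, text, parent=self)
        self.children.append(child)
        return child

    def path(self) -> list[Message]:
        """Return the messages from the root down to this node, i.e. one branch."""
        node: Optional[Message] = self
        out: list[Message] = []
        while node is not None:
            out.append(node)
            node = node.parent
        return out[::-1]


# Usage: fork at the first message and follow one branch further.
root = Message("user", "Plan a study")
branch_a = root.reply("assistant", "Option A")
branch_b = root.reply("assistant", "Option B")  # second reply to the same parent = a fork
deeper_a = branch_a.reply("user", "Go deeper on A")
```

Each branch keeps its own history because `path()` only walks upward through parents, so exploring one path never disturbs the other.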

Use code PRODUCTHUNT for 50% off (forever!) during our public beta (expires April 18).

Happy to answer anything about the product or what led me to build it.

Curious: for those of you using multiple AI models, what's your current workflow? Do you keep separate tabs open, or have you found a better way?