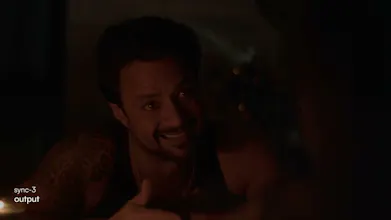

sync-3

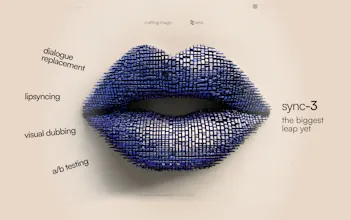

Studio-grade AI lip sync and visual dubbing

264 followers

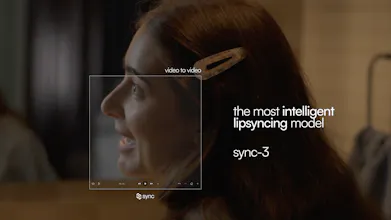

sync-3 is a 16B-parameter AI lip sync model that doesn't just move lips; it understands performances. Built on a global understanding of a person across an entire shot, it generates all frames at once instead of stitching isolated snippets. It handles what breaks every other model (close-ups, occlusions, extreme angles, low lighting), all while preserving the emotion of the original performance across 95+ languages in full 4K. Try it out at sync.so, via API, or in Adobe Premiere.

sync-3

Hey Product Hunt! Kalyan here, head of content and marketing at sync.

We've been building AI lipsync for a while now, and today we're launching sync-3, our most advanced model release ever.

Here's the short version: previous lipsync models (including our own) processed video in small, isolated chunks and stitched them together. sync-3 takes a fundamentally different approach. It builds a global understanding of a person across an entire shot and generates all frames at once. The result is consistency and realism that closes the gap between real footage and dubbed footage.

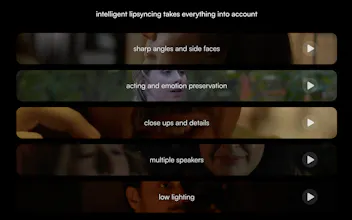

A few things sync-3 handles that nothing else does well:

- Close-ups and partial faces (the full face doesn't need to be visible)

- Extreme angles, including side profiles, over-the-shoulder shots, and other non-frontal views

- Obstructions like hands, mics, scarves - detected and handled automatically

- Speaker style and emotion are preserved, not flattened

- Low lighting and varied lighting scenarios

It's 32x larger than our previous model (16B vs 400M parameters), supports 95+ languages, and outputs in 4K.

You can use it right now at sync.so, through our Adobe Premiere plugin, or via API.

We think of this as the leap from perfecting lip sync to unlocking facial reanimation: the model doesn't just match mouths, it understands performances.

Would love for you to try it and let us know what you think. We're here all day answering questions.

How are you handling edge cases where emotion and lip movement don’t quite align across languages, especially with big differences in sentence structure?

sync-3

@becky_gaskell great question!

sync-3 regenerates the entire facial region to match the new language, instead of just retiming lips on top of the original video.

so when sentence structure differs, it can adjust timing, articulation, and expression together rather than forcing a rigid alignment.

that’s what keeps the performance feeling natural, even across languages with very different rhythms.

@kalyan_mada That’s really interesting, regenerating the full facial region makes a lot of sense. How do you handle identity consistency across longer clips or multiple shots so the person still feels like the same individual?

sync-3

@becky_gaskell Our model was built at its core to understand the identity of the individual being synced, so we are able to maintain that identity consistently across the clip.

@kalyan_mada That’s impressive. How does it handle more challenging cases like occlusions or changes in lighting where identity can be harder to maintain?

Are there any issues with smaller languages? For example, Danish? Usually there’s enough training data for popular languages, but not so much for smaller ones.

sync-3

Hey Product Hunt!

Super happy with the launch. sync-3 is much more powerful than any previous model we've released. My favorite feature is that you can upload a video in which the lips are closed and have it lipsynced without issue and at the highest quality.

We want you to be able to try it, so if you sign up with code SYNC3LAUNCH, you get a free month on the Creator plan and $25 in credits.

Can't wait to see what you create!