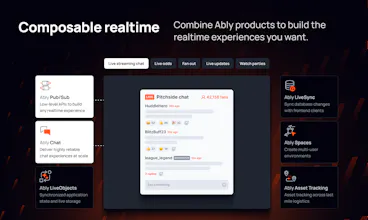

Ably is a realtime platform for resilient AI UX and high-performance live experiences. Built for scale and engineered for reliability, we serve 2B+ devices monthly.

Ably powers agents, chat, notifications and collaboration for teams like HubSpot and Intercom - delivering predictable performance without the cost and complexity of managing realtime infrastructure.

This is the 7th launch from Ably Realtime.

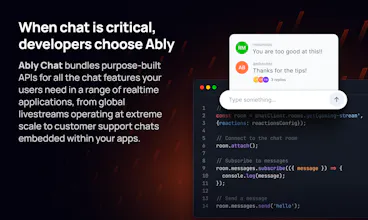

Ably Chat

Launched this week

Ship chat that actually scales. Ably Chat gives developers a purpose-built API for adding realtime messaging to any app - livestreams, in-game comms, customer support, or group chat. SDKs for JS, React, Swift, and Kotlin.

Features include typing indicators, presence, reactions, message edit/delete, read receipts, UI kits, and moderation. Built on infrastructure trusted by HubSpot and 17Live, with guaranteed message ordering, 99.999% uptime, and a global edge network. Scale with confidence.

Free Options


I wonder how pricing scales with usage. That’s usually the deciding factor for me in the long run.

@judith_wang I’m wondering how pricing works as usage grows. It seems powerful, but I’d want to understand the long-term cost.

Realtime infra is one of those things where correctness > features every time.

At your scale, I’m more curious about message ordering guarantees under partition than the API surface itself.

@ahmed_majid2 Completely agree that correctness is absolutely key when operating at scale. We share detailed information about how the platform works in the documentation, including exactly what happens with message ordering in different circumstances https://ably.com/docs/platform/architecture/message-ordering. Let me know if you have more questions!

@fmcor Thanks for the detailed docs — the dual ordering model (realtime vs CGO) makes sense.

I’m curious: in practice, do you see most clients relying on realtime order only, or do production systems actually combine CGO + realtime logic at the application layer for consistency?

Also, for high-throughput cases, is partition-level ordering usually enough, or do teams sometimes implement additional sequencing on top?

@ahmed_majid2 AFAIK most customers use the realtime ordering for live conversations and CGO when they request chat history (e.g. when a client joins a new room or reconnects).

If a customer needs to maintain ordering from a single high-throughput publisher that has been split across multiple publishing clients and/or channels, then they will generally implement additional sequencing on top. Did you have a particular use case or scenario in mind?
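As a rough illustration of the "additional sequencing on top" mentioned above, here is a minimal TypeScript sketch of a consumer-side reorder buffer. It assumes the application stamps each message with its own sequence number before the stream is split across publishing clients or channels. The `SequencedMessage` shape and the `ReorderBuffer` class are hypothetical names for this example and are not part of any Ably SDK.

```typescript
// Hypothetical envelope: `seq` is assigned by the application, not by the platform.
interface SequencedMessage {
  seq: number; // application-level sequence number, starting at 0
  body: string;
}

// Buffers out-of-order arrivals and releases messages strictly in `seq` order.
class ReorderBuffer {
  private nextSeq = 0;
  private pending = new Map<number, SequencedMessage>();

  // Returns the messages that became deliverable, in order.
  accept(msg: SequencedMessage): SequencedMessage[] {
    // Ignore anything already delivered or already waiting in the buffer.
    if (msg.seq < this.nextSeq || this.pending.has(msg.seq)) {
      return [];
    }
    this.pending.set(msg.seq, msg);

    // Drain every consecutive message starting from the next expected one.
    const ready: SequencedMessage[] = [];
    let next = this.pending.get(this.nextSeq);
    while (next !== undefined) {
      ready.push(next);
      this.pending.delete(this.nextSeq);
      this.nextSeq += 1;
      next = this.pending.get(this.nextSeq);
    }
    return ready;
  }
}
```

A message that arrives early is held until the gap before it is filled. A real system would probably also need a timeout or a gap-recovery policy, which this sketch leaves out.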

@fmcor That makes sense — realtime for interaction, CGO for recovery.

In systems I’ve worked on, ordering issues usually show up downstream rather than at the messaging layer — especially once multiple producers are involved.

We ended up treating ordering as a data problem: idempotent consumers, replay-safe pipelines, and lightweight sequencing where strict guarantees were needed.

It simplified the infra a lot compared to pushing strict ordering upstream.

Do you see teams leaning toward that model, or still trying to enforce stronger guarantees at the messaging layer?
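The "idempotent consumers" idea from the comment above can be sketched in a few lines of TypeScript. This assumes every event carries a stable, producer-assigned `id`, so a replayed or redelivered event can be recognised and skipped. `ChatEvent` and `IdempotentConsumer` are hypothetical names for illustration, not an API from Ably or any other library.

```typescript
// Hypothetical event shape: `id` is a stable identifier assigned by the producer.
interface ChatEvent {
  id: string;
  roomId: string;
  text: string;
}

// Applies each event at most once, so replaying a stream is safe.
class IdempotentConsumer {
  private seen = new Set<string>();
  readonly applied: ChatEvent[] = [];

  // Returns true if the event was applied, false if it was a duplicate.
  handle(event: ChatEvent): boolean {
    if (this.seen.has(event.id)) {
      return false;
    }
    this.seen.add(event.id);
    this.applied.push(event);
    return true;
  }
}
```

In this sketch the set of seen ids lives in memory and grows without bound. A production pipeline would more likely persist it or expire old entries, depending on how far back replays can reach.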

scaling chat for livestreams is the final boss of dev. handle millions of concurrent users without the message order getting cooked... if ably chat actually solves this, it's a game changer. @Ably Realtime @faye_mcclenahan1

The mention of AI agents alongside chat is interesting. Realtime infra is evolving beyond human messaging now.