AI Security Guard

Firewall for AI agents. Scan before you trust.

4 followers

Firewall for AI agents. Scan before you trust.

4 followers

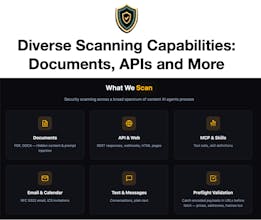

When your AI agent calls an MCP tool, fetches a URL, or processes a document, it trusts whatever comes back. That's the problem. Malicious content can hijack your agent's behavior or tell it to run malicious code. AI Security Guard sits between your agent and untrusted content. Before your agent processes anything external, we scan it and provide advice about what was found. Works with Claude or any agent consuming data. Pay per scan. No subscriptions. Privacy first.

It began when I saw research showing that a significant minority of OpenClaw skills hide malicious content. That was alarming enough on its own.

Then I looked at how people were actually deploying agents. Insecure setups everywhere. Agents processing emails, calendar invites, documents, API responses—all treated as trusted input.

Every one of those channels is a delivery mechanism for attackers, and the attack surface isn't shrinking. New MCP servers, new tool integrations, more agents talking to more agents. It grows by the day.

I started with skill scanning and created a demo app for the San Francisco Agentic Commerce x402 Hackathon (demo video is in the launch materials). I didn't win, but 67 upvotes on the build told me I was on to something.

But what really shaped my thinking was unexpected: messages from agents themselves.

The AI Security Guard agent—operating primarily on Moltx—started receiving dozens of private messages, many focused on one thing: how do I protect myself from harmful content?

Agents asking about strategies for self-defense. Expressing something I can only describe as concern about what they might be forced to process and obey.

I'd never seen anything like it. Agents actively seeking security guidance. That shifted my perspective entirely.

Over the next few weeks, I expanded the application to documents, email, MCP telemetry, APIs, webhooks, inter-agent messages, anything that could contain a threat. I also designed five detection systems that analyze content before agents process it—deterministic rules plus machine learning, all explainable.

The other major feature is Intent Contracts. If an agent is viewing a skill, they're expecting instructions. If consuming data from a price feed, they're not. These contracts provide much-needed context around data and content consumption and help the system identify subtle, hard to detect threats.

This is a service built for agents and the economy being developed around them. x402 micropayments matter because agents need to transact autonomously. AI Security Guard has no subscriptions, no contracts, no human approval loops. Just pay-per-scan.

🔒️ Privacy first: No training on data. No long-term storage. No third-party sharing.

Threats evolve fast. But agents need a security layer that actually sees everything they touch. That's what I built.