WebPizza AI - Private PDF Chat

POC: Private PDF AI using only your browser with WebGPU

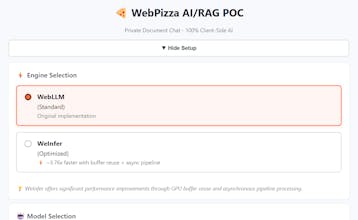

I built this POC to test whether a complete RAG pipeline can run entirely client-side using WebGPU. The key difference from typical setups: zero server dependency. PDF parsing, embeddings, vector search, and LLM inference all happen in your browser. Select a model (Llama, Phi-3, or Mistral), upload a PDF, and ask questions. Documents stay local in IndexedDB, and the app works offline once the models are cached. It also integrates the WeInfer optimization, which achieves a ~3.76x speedup over standard WebLLM through buffer reuse and asynchronous pipeline processing.
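To make the vector search step concrete, here is a minimal sketch of how retrieval in a browser-side RAG pipeline could work: rank stored chunks by cosine similarity to the question embedding, then put the best matches into the prompt. The `Chunk` type and the `cosineSimilarity`, `topK`, and `buildPrompt` functions are hypothetical names for illustration, not taken from the WebPizza code. The embeddings are assumed to come from whatever embedding model the app loads.

```typescript
// Illustrative only: names and shapes are assumptions, not WebPizza's actual code.
interface Chunk {
  id: string;
  text: string;
  embedding: number[]; // assumed to be produced by an in-browser embedding model
}

// Cosine similarity between two vectors of equal length.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error("Embedding dimensions differ");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  // Treat a zero vector as having no similarity to anything.
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Brute-force top-k search: score every chunk, sort descending, keep k.
function topK(query: number[], chunks: Chunk[], k: number): Chunk[] {
  return chunks
    .map((chunk) => ({ chunk, score: cosineSimilarity(query, chunk.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((entry) => entry.chunk);
}

// Assemble a prompt that grounds the model in the retrieved chunks.
function buildPrompt(question: string, context: Chunk[]): string {
  const passages = context.map((c, i) => `[${i + 1}] ${c.text}`).join("\n");
  return `Answer using only the passages below.\n\n${passages}\n\nQuestion: ${question}`;
}
```

A linear scan like this is usually adequate for a single PDF's worth of chunks. A larger corpus would likely need an approximate index instead.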

Swytchcode

Congrats on the launch!