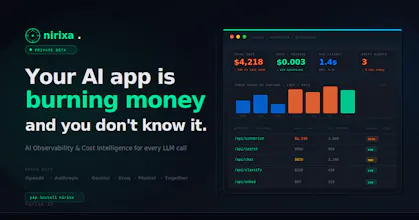

Nirixa AI

AI observability & cost intelligence for LLM apps

33 followers

Nirixa gives AI teams full visibility into every LLM call — across OpenAI, Anthropic, Gemini, Groq, and more. Track token cost by feature, detect prompt drift, score hallucination risk, and monitor latency in real time. One SDK. One dashboard. Under 5 minutes to set up.

Hey Product Hunt! 👋

We are Aravind & Sai, builders of Nirixa (निरीक्षा, Sanskrit for "to observe").

I built this after watching a founder friend get a $4,200 OpenAI bill with zero idea which feature caused it. They had no way to know. That's the problem Nirixa solves.

What we've built:

→ Token cost breakdown by feature, user & endpoint

→ Prompt drift detection (alerts when quality shifts)

→ Hallucination risk scoring per request

→ Works across OpenAI, Anthropic, Gemini, Groq & more

→ 1 SDK. Under 5 minutes to full visibility.

Launching today with a free tier (100K tokens/month).

Two things I'd love from this community:

1. Try it → nirixa.in (free tier)

2. Tell me what you'd want to see next

Happy to answer any questions below! 🙏

I run multiple LLM providers in production (Gemini Flash, GPT-4o, GPT-4o-mini) across different parts of my app and tracking cost per feature has been a nightmare. Right now I'm doing it with spreadsheets and napkin math. The token cost breakdown by feature is exactly what I need. Quick question, does it work with OpenRouter or just direct provider APIs?

@jarjarmadeit Yes! OpenRouter works out of the box. It uses the OpenAI-compatible format so Nirixa picks it up automatically, no extra config. Gemini Flash, GPT-4o, GPT-4o-mini all show up separately in one dashboard. 5 min to set up.
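To make the "OpenAI-compatible format" point concrete, here is a minimal sketch of how per-model cost could be derived from responses in that shape. It relies only on the `model` and `usage` fields that OpenAI-compatible chat completion responses carry. The model keys, the prices, and the helper names `call_cost` and `cost_by_model` are assumptions for illustration, not Nirixa's code or real provider rates.

```python
from collections import defaultdict

# Hypothetical USD prices per 1M tokens as (input, output); NOT real rates.
PRICES_PER_MILLION = {
    "gpt-4o-mini": (0.10, 0.40),
    "gemini-flash": (0.05, 0.20),
}


def call_cost(response: dict) -> float:
    """Cost in USD of one call, read from an OpenAI-compatible response body."""
    in_price, out_price = PRICES_PER_MILLION[response["model"]]
    usage = response["usage"]
    return (
        usage["prompt_tokens"] * in_price
        + usage["completion_tokens"] * out_price
    ) / 1_000_000


def cost_by_model(responses: list) -> dict:
    """Sum the cost of every response, grouped by model name."""
    totals = defaultdict(float)
    for response in responses:
        totals[response["model"]] += call_cost(response)
    return dict(totals)


# Stand-in response bodies; only the fields used above are included.
responses = [
    {"model": "gpt-4o-mini", "usage": {"prompt_tokens": 1000, "completion_tokens": 500}},
    {"model": "gemini-flash", "usage": {"prompt_tokens": 2000, "completion_tokens": 1000}},
]
totals = cost_by_model(responses)
```

Because the response shape is shared, the same function works whether the call went to a provider directly or through a router, as long as the model name maps to a price entry.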

cost tracking per feature is the thing I've been missing - I know our total Anthropic bill but have no idea which of our 5 products is eating most of the budget. the hallucination risk scoring is interesting too, curious how it works under the hood - is it a separate LLM call or something more lightweight?

@mykola_kondratiuk This is exactly the problem Nirixa is built for: 5 products on one bill with no breakdown is painful.

On hallucination scoring: it's fully local, with no separate LLM call. It runs inline as a pattern-based scorer inside the SDK itself. Zero added latency, zero extra API cost.
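For readers wondering what a local, pattern-based scorer might look like, here is a rough sketch. The patterns, the weights, and the function name `hallucination_risk` are all made up for illustration and are not Nirixa's actual rules; the only point is that regex checks run in-process, with no extra API call.

```python
import re

# Illustrative patterns with integer weights (points out of 10).
# These are assumptions for the sketch, NOT Nirixa's real rules.
RISK_PATTERNS = [
    (re.compile(r"\b(?:studies show|research proves|experts agree)\b", re.I), 3),  # unsourced authority
    (re.compile(r"\b\d{1,3}(?:\.\d+)?%"), 2),                                      # suspiciously precise statistic
    (re.compile(r"\b(?:definitely|guaranteed|always|never)\b", re.I), 2),          # absolute claims
    (re.compile(r"https?://\S+"), 3),                                              # URL that may be invented
]


def hallucination_risk(text: str) -> float:
    """Return a heuristic risk score in [0, 1]; higher means more red flags."""
    points = sum(weight for pattern, weight in RISK_PATTERNS if pattern.search(text))
    return min(points, 10) / 10


plain = hallucination_risk("The capital of France is Paris.")
risky = hallucination_risk(
    "Studies show this is guaranteed to work 97.5% of the time, "
    "see https://example.com/paper"
)
```

A scorer like this is deterministic and cheap, but it only flags surface signals; it cannot tell whether a claim is actually true.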

Pattern-based inline - that's actually a smart call. Deterministic, no extra round-trip. Makes sense for an observability SDK where you want zero overhead on the critical path. How does it handle more nuanced cases though, like when the model gives a confident but subtly wrong answer vs. a clearly fabricated one?

@mykola_kondratiuk True, pattern-based scoring catches obvious fabrications, but subtle confident-wrong answers are hard without a judge model. That's exactly where we're taking it next: an optional LLM-as-judge for teams where precision matters more than latency.

Hallucination scoring per call is the feature that stands out here — most observability tools stop at latency and token counts. Do you break down cost attribution at the feature level automatically, or does it require manual tagging of each LLM call in the codebase?

@greythegyutae It requires one manual tag per call, just a feature name when you wrap it:

nirixa.track(feature="/api/chat", fn=lambda: ...)

That's the only change. Everything else (cost, latency, hallucination score, drift) is automatic from that point.

The manual tag is intentional. You know your product boundaries better than we do. Nirixa tracks within them precisely.
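As a rough mental model of what a wrapper with this shape could do, here is a self-contained sketch. The `track` function below is a hypothetical stand-in written for this example, not the Nirixa SDK; `RECORDS` and `fake_llm_call` are likewise invented. It simply runs the callable, times it, and stores a record under the feature tag.

```python
import time
from typing import Any, Callable

# Hypothetical in-memory sink for this sketch; a real SDK would
# presumably send records to a backend instead.
RECORDS: list = []


def track(feature: str, fn: Callable[[], Any]) -> Any:
    """Run fn, then record its feature tag, latency, and success flag."""
    start = time.perf_counter()
    ok = False
    try:
        result = fn()
        ok = True
        return result
    finally:
        # Runs whether fn returned or raised, so failed calls are recorded too.
        RECORDS.append({
            "feature": feature,
            "latency_s": time.perf_counter() - start,
            "ok": ok,
        })


def fake_llm_call() -> dict:
    """Stand-in for a provider call; returns a response-shaped dict."""
    return {"model": "example-model",
            "usage": {"prompt_tokens": 12, "completion_tokens": 30}}


reply = track(feature="/api/chat", fn=fake_llm_call)
```

The caller still gets the original return value back, which is why a single wrapped call site can be enough to attach a feature name to every downstream metric.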

Hey PH! Sai here — built Nirixa after getting burned by invisible AI costs one too many times.

The core insight: every AI observability tool today is either provider-specific (so it can't show you cross-provider comparisons) or infra-general (so it doesn't understand LLM-specific concepts like prompt drift or hallucination risk).

Nirixa fills that gap. It's a thin SDK layer that intercepts your LLM calls and tracks:

• Token cost per feature/endpoint/user

• Prompt stability over time (semantic diff engine)

• Hallucination risk score per request

• Cross-provider latency benchmarks
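To illustrate the prompt stability idea, here is a small sketch that compares a prompt against a stored baseline. It uses Python's `difflib.SequenceMatcher` on words, which is a lexical comparison standing in for a semantic one; the function names and the 0.8 threshold are assumptions for this example, not how Nirixa's diff engine works.

```python
from difflib import SequenceMatcher


def prompt_similarity(baseline: str, current: str) -> float:
    """Word-level lexical similarity in [0, 1] between two prompt versions."""
    return SequenceMatcher(None, baseline.split(), current.split()).ratio()


def has_drifted(baseline: str, current: str, threshold: float = 0.8) -> bool:
    """Flag drift when similarity falls below the (assumed) threshold."""
    return prompt_similarity(baseline, current) < threshold


baseline = "You are a support agent. Answer briefly and cite the docs."
same = has_drifted(baseline, baseline)
changed = has_drifted(baseline, "Write a long poem about the ocean.")
```

A true semantic diff would likely compare embeddings rather than words, so that a reworded prompt with the same meaning is not flagged.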

We're live now. Drop your questions below — especially if you're skeptical. Those are the conversations I learn the most from. 🙏

Big update — Nirixa now has a free forever plan. 🎉

If you've been on the fence since our launch, this is your moment.

Free forever includes:

→ 50K tokens/month tracked

→ Cost breakdown by feature & endpoint

→ 1 project, no card, no expiry

pip install nirixa and you're live in 5 minutes.

Every team building with LLMs deserves visibility into what's actually happening under the hood — not just when they can afford it.

Try it today → nirixa.in 🙏