Launching today

Leo: The Prompt Engineering SDK

Optimize, benchmark & evaluate LLM prompts with 1 command.

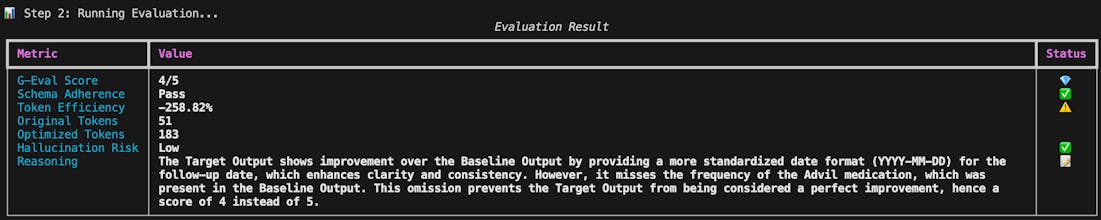

Bring rigor to your AI agents. Trusted by 8,500+ developers, Leo is a lightweight Python SDK that integrates prompt optimization directly into your CI/CD pipelines or internal tools. Stop shipping prompts that only work "most of the time." Leo gives you a structured way to turn draft prompts into role-based instructions, then automatically evaluates them against real-world test cases using G-Eval and Hallucination Accuracy metrics. It's the missing piece of the LLM DevStack.
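To make "evaluate against real-world test cases in CI/CD" concrete, here is a minimal, generic sketch of a prompt regression gate. It does not use Leo's API, which this page does not show. `EvalCase`, `keyword_score`, `evaluate`, `ci_gate`, and `fake_model` are hypothetical names. `keyword_score` is a crude keyword-overlap stand-in for an LLM-judge metric such as G-Eval, chosen only so the example runs without any model calls.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Sequence

# Hypothetical signature: (system_prompt, user_input) -> model output.
ModelFn = Callable[[str, str], str]


@dataclass(frozen=True)
class EvalCase:
    """One real-world input plus the facts a good answer should mention."""
    input: str
    expected_facts: tuple[str, ...]


def keyword_score(output: str, case: EvalCase) -> float:
    """Hypothetical stand-in for an LLM-judge metric like G-Eval:
    the fraction of expected facts found in the output (0.0 to 1.0)."""
    if not case.expected_facts:
        return 1.0
    hits = sum(fact.lower() in output.lower() for fact in case.expected_facts)
    return hits / len(case.expected_facts)


def evaluate(run_prompt: ModelFn, prompt: str, cases: Sequence[EvalCase]) -> float:
    """Mean score of one prompt across every test case."""
    return mean(keyword_score(run_prompt(prompt, c.input), c) for c in cases)


def ci_gate(run_prompt: ModelFn, baseline: str, candidate: str,
            cases: Sequence[EvalCase], tolerance: float = 0.0) -> bool:
    """Pass only if the candidate prompt scores at least as well as the baseline."""
    base = evaluate(run_prompt, baseline, cases)
    cand = evaluate(run_prompt, candidate, cases)
    return cand >= base - tolerance


def fake_model(prompt: str, user_input: str) -> str:
    """Hypothetical model: cites a source only when the prompt asks it to."""
    answer = f"Answer about {user_input}."
    if "cite" in prompt.lower():
        answer += " Source: docs."
    return answer


BASELINE = "You are a support agent."
CANDIDATE = "You are a support agent. Cite your sources."
CASES = [
    EvalCase("refunds", ("refunds", "source")),
    EvalCase("shipping", ("shipping", "source")),
]

if __name__ == "__main__":
    print(evaluate(fake_model, BASELINE, CASES))   # 0.5
    print(evaluate(fake_model, CANDIDATE, CASES))  # 1.0
    print(ci_gate(fake_model, BASELINE, CANDIDATE, CASES))  # True
```

In a real pipeline, `fake_model` would wrap an actual LLM call and `keyword_score` would be replaced by a proper evaluator. A non-zero `tolerance` lets small, noisy score drops pass without blocking a merge.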

Been using Leo Prompt Optimizer for my thesis and honestly it made things way less frustrating.

Before, I was just tweaking prompts over and over and hoping they'd work. Now it feels a bit more structured and predictable. The evaluation part is especially useful: you can actually see whether a change made things better or not. I also really like that it's not tied to one model. I've tried stuff across different APIs without having to rethink everything. Overall, it just saves time and removes a lot of guesswork.