CoChat

OpenClaw for Teams that is secure, collaborative, and autonomous

352 followers

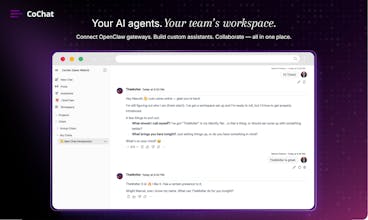

CoChat is where your team and AI agents work together. It’s the most secure way to use OpenClaw with a company: connect self-hosted or CoChat-managed gateways and share agents without sharing your machine (no SSH). Every connection is auto security-audited, with logs and approvals for sensitive steps. Agents have personality, memory, and scheduled tasks. The thing that makes it click: one thread where humans and agents bring different strengths and produce better output together.

CoChat

Hey PH 👋 I'm Marcel, founder of CoChat.

The short version: we built a workspace where AI agents (OpenClaw and others) work alongside your team, not as isolated chatbots but as teammates with memory, personality, and real responsibilities.

Why we built CoChat:

I’ve been running OpenClaw gateways for a while. Powerful stuff. But every time I tried to bring my team into that world — to share context, keep knowledge persistent, coordinate work, and move projects forward together — things broke down.

Agents lived in silos. Context got lost. Progress stalled.

There wasn’t a real place for teams to collaborate with AI.

So we built one.

Here's what CoChat does today:

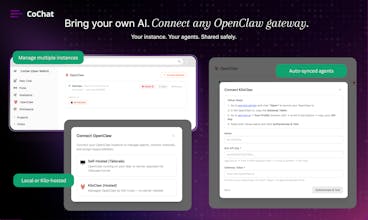

🔌 Connect your OpenClaw gateways — bring any agents you've already built. They show up alongside native CoChat assistants. One workspace, multiple sources.

🛡️ Every gateway gets audited automatically: our open-source security scanner (Carapace) runs 225+ CVE checks and 24 audit rules on connect. You see the score. You see the findings. No black boxes, just full visibility before agents ever touch your workflow.

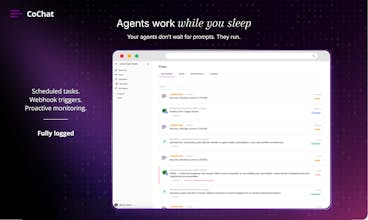

🧠 Agents with real depth — each assistant has a distinct personality, its own memory that grows over time, and scheduled responsibilities (cron, webhooks, intervals). They do actual work: monitoring, reporting, and research on autopilot.

👥 Collaborative conversations — invite teammates and agents into the same chat. Your marketing lead, your security agent, and your data analyst (human or AI) in one thread. Each agent keeps its voice — projects move forward because roles stay clear and context isn’t lost.

What you can try right now:

Spin up a free workspace at cochat.ai

Connect an OpenClaw gateway (or start with native assistants)

Invite your team and run a real collaborative thread

Pricing:

Free credits on signup. No subscription — just pay for what you use.

🎁 PH-only: Double credits on your first purchase.

Two things I’d genuinely love feedback on:

When connecting AI agents to a team workspace, what does “secure” need to mean for you to trust it?

Would you use scheduled agent tasks (e.g., “run this research every Monday at 8am”)? If yes, for what?

I’ll be here all day. Happy to answer questions, walk through a setup, or debate whether AI agents should have personalities. (They should.)

- Marcel

Told

The 'one thread where humans and agents collaborate' framing is the right bet — most team AI tools fail because they treat AI as a separate workflow instead of an integrated participant, so people revert to old habits. Curious how you handle the trust layer when a new team member joins: do they inherit existing agent memory and approvals, or start fresh? The security audit on every connection is a smart default that removes the 'who approved this?' conversation that kills adoption in ops-heavy teams. The scheduled tasks plus memory combo is where I'd expect the stickiest use cases to emerge — that's the shift from 'tool you use' to 'system that works for you.'

CoChat

@jscanzi Spot on with the "integrated participant" observation, that's exactly the bet.

On the trust layer for new team members: they get access to shared conversations and project context immediately, but tool approvals and agent permissions are scoped by role. A new hire sees what the agents have done. They don't automatically get the keys to reconfigure them.

So the agent's memory and conversation history are shared. The ability to change which tools an agent can access, or to modify its scheduled tasks, is gated. Your ops lead and your new junior hire see the same workspace but have different controls.

It's the same logic as giving someone read access to a repo before they get merge rights. Context is shared. Control is earned. And agreed on scheduled tasks plus memory, that's where it clicks. An agent that remembers what it did last Tuesday and runs again next Tuesday without being asked is a fundamentally different thing than a chatbot.
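A minimal sketch of the "context is shared, control is earned" split described above. The role names (`member`, `admin`) and action names are hypothetical, chosen only for illustration; they are not CoChat's actual roles or API.

```python
from enum import Enum


class Action(Enum):
    READ_HISTORY = "read_history"      # see what agents have done
    READ_MEMORY = "read_memory"        # see shared agent memory
    CHANGE_TOOLS = "change_tools"      # change which tools an agent can access
    EDIT_SCHEDULE = "edit_schedule"    # modify an agent's scheduled tasks


# Hypothetical role table: everyone gets context, only some roles get control.
SHARED_CONTEXT = {Action.READ_HISTORY, Action.READ_MEMORY}
ROLE_ACTIONS = {
    "member": set(SHARED_CONTEXT),
    "admin": SHARED_CONTEXT | {Action.CHANGE_TOOLS, Action.EDIT_SCHEDULE},
}


def can(role: str, action: Action) -> bool:
    """Return True if the given role may perform the action; unknown roles get nothing."""
    return action in ROLE_ACTIONS.get(role, set())
```

With this table, a new hire in the `member` role can read history and memory, while only `admin` can reconfigure tools or schedules, which mirrors the read-access-before-merge-rights analogy.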

Told

@marcelfolaron thanks for your answer!

Saw CoChat's PH launch, and 249 upvotes is solid validation for collaborative AI tooling. What caught my attention: you're leading with 'autonomous' + 'secure', which typically conflict in the enterprise buying committees I work with.

Quick question: how are you positioning the autonomous capabilities to InfoSec teams who usually flag agent-based tools as data exfiltration risks?

From a marketing lens, I'm seeing B2B AI tools struggle with attribution when selling 'collaboration', because the buying committee is fragmented and CAC spikes without clear product-led funnels. If you're exploring paid acquisition for MENA markets (where data residency is make-or-break), I'd be interested in exchanging notes on how AI collaboration tools are approaching regional compliance in ad messaging.

Congrats on the launch.

CoChat

@ielrefaae The short answer: we don't position autonomous and secure as tradeoffs. They're the same system.

Every agent action goes through a scoped MCP proxy, and each automation run gets its own JWT with specific tool permissions. An agent that can post to Slack can't read from GitHub unless you explicitly grant it. Execution is sandboxed with no host access, and browser tasks run in ephemeral VMs.

There is a full audit trail on every tool call. So when an InfoSec team asks "what can this agent access?" the answer is specific and auditable, not "well, it depends." That's usually what unblocks the conversation: the blast radius is contained by architecture, not by policy.

On the data exfiltration concern specifically: agents can't reach tools they're not scoped for, storage is tenant-isolated and encrypted, and every action is logged and searchable. Most security teams we talk to are more comfortable once they see the proxy layer — it's closer to how they already think about API access control.
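To make the per-run scoping concrete, here is a rough sketch using only the Python standard library. It signs a JWT-shaped token (HS256) that lists the tools one run may call, then checks and logs every call. The secret, claim names (`run`, `tools`, `exp`), tool names, and function names are all assumptions for illustration; this is not CoChat's proxy, and a real deployment would use a vetted JWT library and proper key management.

```python
import base64
import hashlib
import hmac
import json
import time

SECRET = b"demo-secret"  # hypothetical signing key, for illustration only
AUDIT_LOG: list = []     # every tool call is recorded here, allowed or not


def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def _unb64(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(signing_input: str) -> str:
    return _b64(hmac.new(SECRET, signing_input.encode(), hashlib.sha256).digest())


def issue_run_token(run_id: str, tools: list, ttl_s: int = 300) -> str:
    """Issue a short-lived token scoped to the given tools for a single run."""
    header = _b64(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    claims = {"run": run_id, "tools": tools, "exp": int(time.time()) + ttl_s}
    payload = _b64(json.dumps(claims).encode())
    return f"{header}.{payload}.{_sign(f'{header}.{payload}')}"


def verify_run_token(token: str) -> dict:
    """Check signature and expiry, then return the claims."""
    header, payload, signature = token.split(".")
    if not hmac.compare_digest(signature, _sign(f"{header}.{payload}")):
        raise PermissionError("bad signature")
    claims = json.loads(_unb64(payload))
    if claims["exp"] < time.time():
        raise PermissionError("token expired")
    return claims


def call_tool(token: str, tool: str) -> str:
    """Proxy entry point: log the call, then allow it only if the run is scoped for it."""
    claims = verify_run_token(token)
    allowed = tool in claims["tools"]
    AUDIT_LOG.append({"run": claims["run"], "tool": tool, "allowed": allowed})
    if not allowed:
        raise PermissionError(f"run {claims['run']} is not scoped for {tool}")
    return f"{tool}: ok"
```

A run issued a token for `slack.post` alone can post to Slack, but a call to `github.read` raises `PermissionError`, and both attempts land in `AUDIT_LOG`.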

Appreciate the kind words on the launch.

Oh this is cool — I love that agents here actually have memory and personality instead of being "ask and forget" chatbots.

Since you asked for feedback:

1. On security — honestly, the biggest thing for me is visibility. I want to see exactly what each agent has access to and what it did. Audit logs are a must. I build iOS apps and I route all my AI API calls through a proxy just to keep keys off the device, so I really appreciate that you're thinking about this at the infra level. The 225+ CVE auto-scan is a nice touch.

2. Scheduled tasks — 100% yes. Off the top of my head: weekly monitoring of competitor reviews on the App Store, auto-summarizing GitHub commits into changelogs, daily crash report digests. Basically anything that's "go check this thing regularly and yell at me if something's off."

Also the no-SSH approach is such a relief. Setting up tunnels to self-host AI tools has always been the part where I lose motivation lol. Great work on this!

CoChat

@sparkuu Thank you for your feedback!

On visibility: that's exactly how it works. Every tool call and every agent action shows up in your Activity feed: what it accessed, when, and what it did with it. Searchable and filterable. If you're already proxying API keys to keep them off-device, you'll feel right at home with how we scope tool access.

On your scheduled task ideas, all three work today:

Competitor App Store monitoring: set up an agent with web search access on a weekly cron, have it pull and summarize reviews

GitHub commit changelogs: connect your repo, schedule a weekly digest, agent summarizes what shipped

Crash report digests: connect your monitoring tool, daily cron, agent flags what matters

That's the "hire an employee, give them a job" model. You define who the agent is, what tools it gets, and when it runs. It handles the rest and reports back.
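As a rough illustration of "who the agent is, what tools it gets, and when it runs", the three ideas above could be written down as cron-scheduled tasks. The task names, tool names, and times of day are hypothetical, and the cron matcher below is deliberately simplified: it understands only `*` and single numbers per field. This is a sketch, not CoChat's scheduler.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class ScheduledTask:
    name: str
    cron: str      # "minute hour day-of-month month day-of-week"
    tools: tuple   # tools the agent may use for this task


# Hypothetical schedules for the three ideas in the thread.
TASKS = [
    ScheduledTask("competitor-review-summary", "0 8 * * 1", ("web_search",)),  # Mondays 08:00
    ScheduledTask("commit-changelog", "0 9 * * 5", ("github_read",)),          # Fridays 09:00
    ScheduledTask("crash-digest", "0 7 * * *", ("monitoring_read",)),          # daily 07:00
]


def _matches(field: str, value: int) -> bool:
    # Simplification: only "*" or a single integer is supported.
    return field == "*" or int(field) == value


def is_due(cron: str, now: datetime) -> bool:
    """Return True if the simplified cron expression matches this minute."""
    minute, hour, dom, month, dow = cron.split()
    cron_weekday = now.isoweekday() % 7  # cron convention: 0 = Sunday ... 6 = Saturday
    return all((
        _matches(minute, now.minute),
        _matches(hour, now.hour),
        _matches(dom, now.day),
        _matches(month, now.month),
        _matches(dow, cron_weekday),
    ))


def due_tasks(now: datetime) -> list:
    """Names of all tasks that should start at the given minute."""
    return [task.name for task in TASKS if is_due(task.cron, now)]
```

Calling `due_tasks(datetime(2024, 1, 1, 8, 0))`, a Monday at 08:00, selects only the weekly competitor review task.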

And yeah, no SSH was a deliberate choice. CoChat Bridge handles the connection. Install it, it works.

We lost motivation on tunnel setups too.

Thanks for trying it out.

OpenOwl

The "agents as teammates" framing really resonates. We've been dealing with the exact same problem on our team where everyone has their own AI setup but there's zero shared context between them. The gateway security audit feature caught my eye too, how granular are the permission controls per agent?

CoChat

@mihir_kanzariya Thank you. On the CoChat side, each agent has custom access to a set of tools you define, and you can override the tool selection for each responsibility separately. For OpenClaw instances, tools are managed as part of the OpenClaw configuration. Additionally, we have a tool firewall that checks for dangerous tool calls in OpenClaw.
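One way to picture the per-agent tool set with per-responsibility overrides is the small model below. The class and field names (`Agent`, `Responsibility`, `tools_override`) and the tool names are assumptions made for this sketch, not CoChat's data model.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Responsibility:
    name: str
    # None means "inherit the agent's default tools".
    tools_override: Optional[frozenset] = None


@dataclass
class Agent:
    name: str
    tools: frozenset
    responsibilities: list = field(default_factory=list)

    def tools_for(self, responsibility_name: str) -> frozenset:
        """Effective tools for one responsibility: the override if set, else the agent default."""
        for responsibility in self.responsibilities:
            if responsibility.name == responsibility_name:
                if responsibility.tools_override is not None:
                    return responsibility.tools_override
                return self.tools
        raise KeyError(responsibility_name)


analyst = Agent(
    name="data-analyst",
    tools=frozenset({"web_search", "sheets_read"}),
    responsibilities=[
        Responsibility("weekly-report"),                             # inherits defaults
        Responsibility("post-summary", frozenset({"slack_post"})),   # narrowed override
    ],
)
```

Here `analyst.tools_for("weekly-report")` falls back to the agent's defaults, while `analyst.tools_for("post-summary")` returns only the overridden set.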

CoChat

Hey @wintongee, appreciate you reaching out. To clarify: CoChat is a collaborative AI workspace for teams and AI agents (OpenClaw). We haven't pivoted to "LinkedIn for AI" or anything resembling that description, so I think there may be a misunderstanding about what we're building.

Similar names happen: "co" + "chat" is a pretty natural combination. And a chat demo on the landing page of a chat product is standard practice, especially for AI applications, not something either of us invented.

Happy to talk it through: hello@cochat.ai.

But the accusations of copying don't line up with what we're actually doing.

Copperlane

Cool concept! How do you think about managing agent permissions / preventing agents from taking risky actions?

CoChat

@brianna_lin great question. For OpenClaw agents we built a “tool firewall” that checks for dangerous tool calls and asks you for approval before executing them. The patterns for dangerous tool calls are configurable, and we will keep iterating on them.
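A pattern-based check like the one described could look roughly like this. The default patterns, the `ToolFirewall` class, and the `"ask"`/`"allow"` verdicts are hypothetical and only illustrate the idea of configurable rules that pause risky calls for approval; they are not the actual firewall rules.

```python
import re
from dataclasses import dataclass, field

# Hypothetical default patterns for risky tool-call arguments.
DEFAULT_PATTERNS = [
    r"\brm\s+-rf\b",                   # recursive force delete
    r"\bcurl\b.*\|\s*(sh|bash)\b",     # piping a download straight into a shell
    r"\bDROP\s+TABLE\b",               # destructive SQL
]


@dataclass
class ToolFirewall:
    # Patterns are plain data, so a team can extend or replace them.
    patterns: list = field(default_factory=lambda: list(DEFAULT_PATTERNS))

    def check(self, tool: str, arguments: str) -> str:
        """Return "ask" if the call matches a risky pattern, otherwise "allow"."""
        for pattern in self.patterns:
            if re.search(pattern, arguments, re.IGNORECASE):
                return "ask"   # pause and request human approval before executing
        return "allow"


firewall = ToolFirewall()
```

With the defaults, `firewall.check("shell", "rm -rf /tmp/build")` returns `"ask"`, while a harmless `ls -la` returns `"allow"`. Adding a pattern to `firewall.patterns` changes what gets flagged without touching the check itself.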