Launching today

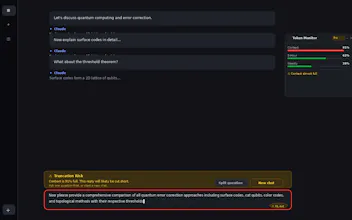

Token Monitor: AI Context Tracker

See how much Claude you have left before you hit the wall

Token Monitor is a real-time context and quota monitor for Claude.ai. It gives you live, at-a-glance visibility into every dimension of your usage and sends smart alerts, so you never get blindsided by a cut-off reply or an unexpected quota limit.

The story

Here's a scenario every heavy Claude user knows:

You're 40 messages into a complex coding session. You've uploaded files. You've got tools enabled. The conversation is cooking. Then the responses start getting weird — shorter, vaguer, slightly off. A few turns later you're rate-limited.

What happened? You ran out of context. You ran out of quota. You had no idea either was coming.

I built Token Monitor because I kept hitting this wall and wanted to see the dashboard that should have been there all along.

What Token Monitor shows you

Your quota, in real time. 👌

Claude doesn't run on simple daily limits — it uses rolling 5-hour windows and (for Max plans) weekly budgets. Token Monitor reads your actual consumption and tells you what's left, and exactly when the next slot unlocks. No more refreshing /settings/usage hoping something changed.
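To make the "when does the next slot unlock" idea concrete, here is a minimal sketch of the countdown arithmetic. It is not Token Monitor's actual code: the `QuotaWindow` shape, the function names, and the assumption that a window opens at your first message and closes five hours later are all illustrative.

```typescript
// Hypothetical data shape, for illustration only.
interface QuotaWindow {
  usedFraction: number; // share of the window's budget consumed, 0..1
  resetsAt: Date;       // when the current window ends
}

const FIVE_HOURS_MS = 5 * 60 * 60 * 1000;

// ASSUMPTION: the window opens at the first message and lasts five hours.
function windowFromFirstMessage(firstMessageAt: Date, usedFraction: number): QuotaWindow {
  return {
    usedFraction: Math.min(1, Math.max(0, usedFraction)), // clamp to 0..1
    resetsAt: new Date(firstMessageAt.getTime() + FIVE_HOURS_MS),
  };
}

// Turns a window into a short status line such as "38% left, resets in 2h 30m".
function describeQuota(w: QuotaWindow, now: Date): string {
  const msLeft = Math.max(0, w.resetsAt.getTime() - now.getTime());
  const totalMinutes = Math.ceil(msLeft / 60000);
  const hours = Math.floor(totalMinutes / 60);
  const minutes = totalMinutes % 60;
  const percentLeft = Math.round((1 - w.usedFraction) * 100);
  return `${percentLeft}% left, resets in ${hours}h ${minutes}m`;
}

// Example: first message at 09:00 UTC, checked at 11:30 UTC with 62% used.
const exampleWindow = windowFromFirstMessage(new Date("2024-01-01T09:00:00Z"), 0.62);
const exampleStatus = describeQuota(exampleWindow, new Date("2024-01-01T11:30:00Z"));
// exampleStatus is "38% left, resets in 2h 30m"
```

A weekly budget would follow the same pattern with a longer window length.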

Your context, broken down.

Every message you send carries your full conversation history as baggage. Token Monitor scans the active chat live and shows a breakdown: conversation turns, uploaded attachments, enabled tools and connectors, project knowledge docs. You see the real cost of what's loaded, not just a vague message count.
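As a rough illustration of what a per-category breakdown involves, the sketch below sums estimated tokens for each kind of context item. The category names, the `ContextItem` shape, and the 4-characters-per-token heuristic are assumptions made for this example; real tokenizers count differently, and this is not the extension's actual implementation.

```typescript
// Hypothetical categories mirroring the list above.
type ContextCategory = "turns" | "attachments" | "tools" | "projectDocs";

interface ContextItem {
  category: ContextCategory;
  text: string; // raw text of the turn, file, tool definition, or doc
}

// ASSUMPTION: roughly 4 characters per token. This is only a heuristic.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

// Adds up the estimated tokens for each category.
function breakdown(items: ContextItem[]): Record<ContextCategory, number> {
  const totals: Record<ContextCategory, number> = {
    turns: 0,
    attachments: 0,
    tools: 0,
    projectDocs: 0,
  };
  for (const item of items) {
    totals[item.category] += estimateTokens(item.text);
  }
  return totals;
}
```

Keeping the categories separate is what lets you see, for example, that one large attachment outweighs dozens of conversation turns.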

A warning before truncation — while you're still typing.

Before you press Enter, Token Monitor estimates whether your prompt plus the expected response will overflow the context window. If it will, the composer flags it. It also detects multi-question prompts, one of the sneakiest causes of half-answers, and suggests splitting them.
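The check described above boils down to one comparison plus a question count. Here is a minimal sketch under stated assumptions: the function names, the 4-characters-per-token estimate, and counting question marks as a proxy for "multi-question" are all hypothetical, and the context window size is passed in rather than assumed.

```typescript
interface DraftCheck {
  projectedTotal: number; // context + draft + expected response, in tokens
  willOverflow: boolean;
  questionCount: number;
  suggestSplit: boolean;
}

// Counts runs of question marks, so "what??" counts as one question.
function countQuestions(draft: string): number {
  const matches = draft.match(/\?+/g);
  return matches ? matches.length : 0;
}

// ASSUMPTION: ~4 characters per token for the draft. All other numbers are inputs.
function checkDraft(
  draft: string,
  contextTokens: number,        // tokens already loaded in the conversation
  expectedOutputTokens: number, // predicted size of the reply
  contextWindow: number         // model limit, supplied by the caller
): DraftCheck {
  const draftTokens = Math.ceil(draft.length / 4);
  const projectedTotal = contextTokens + draftTokens + expectedOutputTokens;
  const questionCount = countQuestions(draft);
  return {
    projectedTotal,
    willOverflow: projectedTotal > contextWindow,
    questionCount,
    suggestSplit: questionCount > 1,
  };
}
```

Counting question marks is deliberately crude. It misses questions phrased as statements, but it is cheap enough to run on every keystroke.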

Output size prediction.

"What is X?" and "Build me a full implementation of Y" consume wildly different tokens. Token Monitor reads your draft and predicts the output bucket (S / M / L / XL) so you know what you're about to spend before committing.

Stream state awareness.

The moment you press Enter, your input tokens are locked in — even if you stop the response. Token Monitor shows you live: tokens committed, tokens streamed back, current state. No surprises on partial stops.
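The stream states above map naturally onto a small state machine. This is a sketch with hypothetical state and event names, not the extension's internals. The point it illustrates is that stopping a response changes the state but leaves the committed input tokens untouched.

```typescript
type StreamState = "idle" | "streaming" | "stopped" | "complete";

interface StreamTracker {
  state: StreamState;
  committedInputTokens: number; // locked in at send time
  streamedOutputTokens: number; // grows as the reply streams back
}

// Hypothetical events a tracker might observe.
type StreamEvent =
  | { type: "send"; inputTokens: number }
  | { type: "chunk"; tokens: number }
  | { type: "stop" }
  | { type: "done" };

const initialTracker: StreamTracker = {
  state: "idle",
  committedInputTokens: 0,
  streamedOutputTokens: 0,
};

// Pure reducer: returns the next tracker without mutating the previous one.
function nextTracker(t: StreamTracker, e: StreamEvent): StreamTracker {
  switch (e.type) {
    case "send":
      // Input tokens are committed the moment the message is sent.
      return { state: "streaming", committedInputTokens: e.inputTokens, streamedOutputTokens: 0 };
    case "chunk":
      // Chunks only count while a response is actually streaming.
      return t.state === "streaming"
        ? { ...t, streamedOutputTokens: t.streamedOutputTokens + e.tokens }
        : t;
    case "stop":
      // A manual stop keeps everything already committed and streamed.
      return t.state === "streaming" ? { ...t, state: "stopped" } : t;
    case "done":
      return t.state === "streaming" ? { ...t, state: "complete" } : t;
  }
}
```

Feeding it a send of 1200 tokens, two chunks of 50 and 30, and then a stop leaves the tracker in `stopped` with 1200 tokens committed and 80 streamed.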

Who it's for

Claude Pro / Max subscribers who want to squeeze more value out of their plan

Power users running long research sessions, coding projects, or document workflows

Teams where hitting limits mid-task has real productivity cost

Anyone who has ever been surprised by a truncated response or an unexpected rate limit

How it works

Token Monitor is a Chrome extension. It reads the same usage page you can visit yourself at /settings/usage, and scans the current chat DOM — all locally, in your browser. Your conversations are never sent anywhere. No account, no backend, no analytics.

What's next

Claude support is live and full-featured. ChatGPT and Gemini modules are in progress. Usage history and charts are on the roadmap.

Try it

Install Token Monitor on the Chrome Web Store → https://chromewebstore.google.com/detail/token-monitor-%E2%80%94-ai-contex/nhdncpkcgffiekmegejdljkfbkelhkoa

Free to install. No account needed.

Happy to answer questions below, especially from anyone who's hit the "why did my context just vanish" moment. That's exactly why this exists.