Meta-Reasoning

Your LLM doesn't think. We make sure of it.

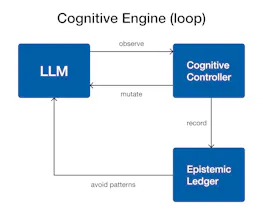

Meta-Reasoning is an SDK for governing how LLMs reason. Instead of treating models as agents, it externalizes cognition into a controllable architecture: it observes reasoning structure, mutates that structure under constraints, and records each trajectory in an epistemic ledger. This enables debugging, replay, benchmarking, and CI/CD for reasoning, making LLM behavior testable, deterministic, and governed. Already available on 🦞OpenClaw, 🟠Claude Code and 🤖Codex!