CodeRay

Stop token burn. Agents read lines, not file dumps.

AI agents burn tokens reading file dumps when a small snippet would do. Context fills up, they lose track, re-explore, read more. Round and round. CodeRay gives agents exact locations in your codebase instead of full files. They read only what they need, using ~70% fewer tokens on average. Three tools shipped over CLI and MCP: search (natural language), skeleton (structure and docs only), impact (what breaks before you change something). Runs fully local – your code never leaves your machine.

Hi everyone,

I built this after hitting token limits way more than expected on various subscription plans across different vendors.

The pattern was always the same: agent reads a whole file, it floods the context, it loses track, re-explores, reads more files. Round and round until the session dies.

CodeRay gives agents coordinates instead of content – file paths + line ranges. They locate first, then read only the lines that matter.

Most agents and coding tools already support reading files by line range, but they rarely get the chance to use it. CodeRay is the missing piece: it tells them where to look, so they read a 20-line slice instead of dumping a 2,000-line file into context. In my own sessions, that cut token usage by roughly 70%.
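To make the locate-first idea concrete, here is a minimal sketch of the "read only the slice" step. It is not CodeRay's code: the `read_lines` helper and its arguments are hypothetical, and it only assumes the agent has already been handed a file path plus a 1-indexed, inclusive line range.

```python
from itertools import islice
from pathlib import Path


def read_lines(path: str, start: int, end: int) -> list[str]:
    """Return lines start..end (1-indexed, inclusive) of a text file.

    Hypothetical helper for illustration; the file is streamed, so lines
    after `end` are never read into memory.
    """
    if start < 1 or end < start:
        raise ValueError("expected 1 <= start <= end")
    with Path(path).open(encoding="utf-8") as handle:
        # islice uses 0-based, end-exclusive bounds, hence start - 1.
        return [line.rstrip("\n") for line in islice(handle, start - 1, end)]
```

Given a location such as lines 101 to 120 of a 2,000-line file, `read_lines(path, 101, 120)` returns 20 lines, and only those 20 lines end up in the agent's context.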

Works as a CLI or MCP stdio server – so agents can call it directly without leaving their workflow. Also ships with AGENTS.md and SKILLS.md: drop them in your repo and agents pick up the locate-first pattern automatically, no extra prompting needed.

Python (most tested) and JS/TS (less tested) are supported today, with more languages coming.

No API key, no LLM, no network. Everything stays local – one gitignore-able artifact.

Additional features:

Multi-repo / monorepo – index multiple roots or just a subtree (sub-modules/files)

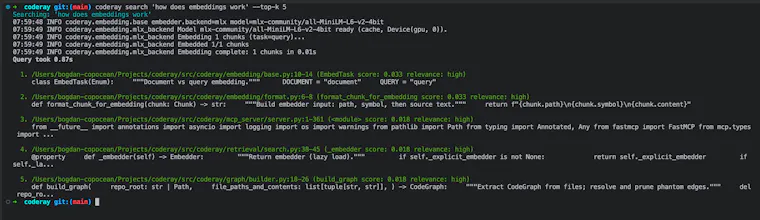

Hybrid search – combines semantic (vector) and keyword (BM25) search under the hood and merges the two result lists with reciprocal rank fusion (RRF), with optional boosting

Embedder runs fully on-device – CPU via fastembed, or faster on Apple Silicon via MLX

Live re-indexing – watches for file changes and updates incrementally; git-aware so it skips what it shouldn't touch; .coderay.toml controls what gets indexed.
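For readers unfamiliar with the RRF step mentioned under hybrid search, here is a minimal sketch of reciprocal rank fusion in general. It is not CodeRay's implementation: the function name, the example location strings, and the constant `k = 60` (a commonly used default for RRF) are all assumptions made for illustration, and the optional boosting is left out.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists into one, best result first.

    Each item scores 1 / (k + rank) per list it appears in (rank starts at 1),
    so items ranked well by several searches rise to the top.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, item in enumerate(ranking, start=1):
            scores[item] += 1.0 / (k + rank)
    # Sort by descending score; break ties alphabetically for stable output.
    return sorted(scores, key=lambda item: (-scores[item], item))


# Hypothetical results from a vector search and a BM25 keyword search.
vector_hits = ["auth.py:10-30", "session.py:5-25", "utils.py:1-12"]
keyword_hits = ["session.py:5-25", "tokens.py:40-60", "auth.py:10-30"]

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
```

RRF only looks at ranks, not raw scores, which is why it is a convenient way to combine vector similarity and BM25 scores that live on different scales.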

I'd love feedback on the tools themselves!