Pipevals

Evaluation pipelines for every LLM application

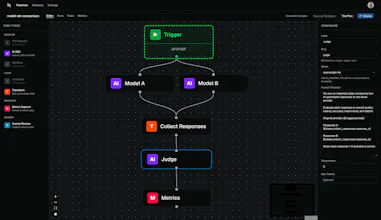

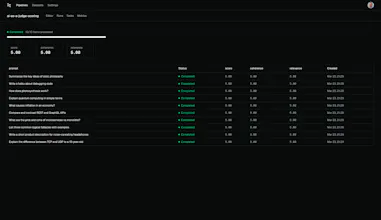

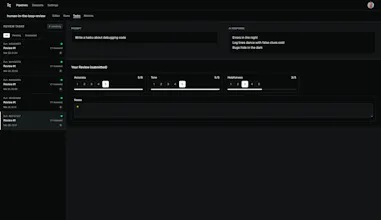

Evaluating LLM output by eyeballing it works... until it doesn’t. Pipevals is an open-source pipeline builder for AI evaluation. Trigger a run with a single HTTP POST from your existing code, and Pipevals pipes the data through AI judges, scoring, and human review. Every run executes durably and records step-by-step results. Dashboards automatically track trends, distributions, and pass rates, so you can compare models, test prompts, and catch regressions. Self-hosted and MIT-licensed.
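To show what "a single HTTP POST from your existing code" might look like, here is a minimal Python sketch using only the standard library. The base URL, the route `/api/pipelines/<id>/runs`, the pipeline ID, and the payload fields are all hypothetical placeholders, not Pipevals' documented API; check the project's own documentation for the real endpoint and schema. The request builder is kept separate from the sender so the request can be inspected without a running server.

```python
import json
import urllib.request

# HYPOTHETICAL values: Pipevals' real endpoint path and payload schema may differ.
PIPEVALS_BASE_URL = "http://localhost:3000"  # assumed self-hosted address
PIPELINE_ID = "my-pipeline"                  # hypothetical pipeline identifier


def build_trigger_request(base_url: str, pipeline_id: str, payload: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request that would start one pipeline run."""
    url = f"{base_url}/api/pipelines/{pipeline_id}/runs"  # assumed route, not confirmed
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def trigger_run(base_url: str, pipeline_id: str, payload: dict) -> dict:
    """Send the request and decode the JSON response (requires a running server)."""
    req = build_trigger_request(base_url, pipeline_id, payload)
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))


if __name__ == "__main__":
    # Hypothetical payload: one prompt/response pair handed to the pipeline for judging.
    sample = {
        "input": "Summarize the refund policy in one sentence.",
        "output": "Refunds are available within 30 days of purchase.",
    }
    req = build_trigger_request(PIPEVALS_BASE_URL, PIPELINE_ID, sample)
    print(req.get_method(), req.full_url)  # inspect only; nothing is sent here
```

In a real integration you would call `trigger_run(...)` right after your application produces an LLM response, leaving the judging, scoring, and human review to the pipeline itself.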

Pushline

Hey PH, Pipevals is still early, but I’d love to hear your thoughts!