Precia

Find the best LLM for your task. Save 2-10x.

3 followers


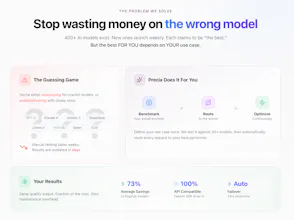

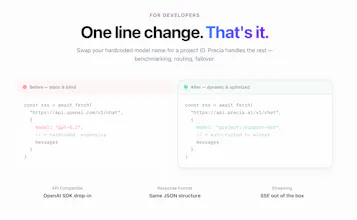

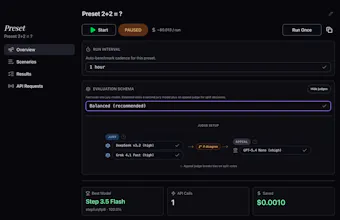

Stop hardcoding AI models. Precia benchmarks a curated set of LLMs on your specific scenarios, scores them with AI judges, and automatically routes API traffic to the current winner. Find the best LLM for your task and cut costs 2-10x. It ships with an OpenAI-compatible API, built-in fallback, and continuous re-benchmarking to catch silent drift. Currently v0.1 alpha, with bring-your-own-key (BYOK) via OpenRouter. Tell us what’s missing.

Hey Product Hunt, I’m Nikita, maker of Precia.

I built Precia because “which model should we use?” kept turning into guesswork. Generic benchmarks rarely tell you which model is best for your actual support bot, summarizer, or extraction pipeline, and the answer changes as models launch and drift.

Benchmark LLMs on your own scenarios, not generic benchmarks.

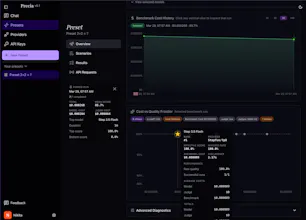

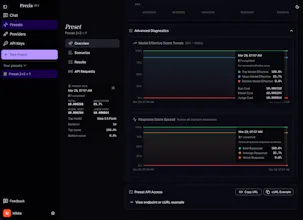

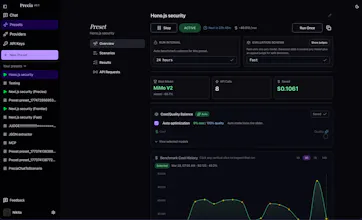

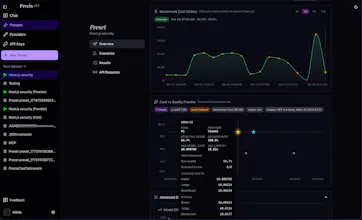

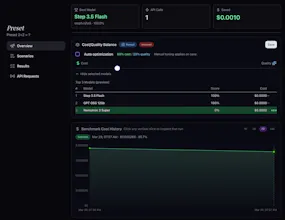

Precia scores responses against your rubric, automatically finds the best quality/cost fit, and routes production traffic to the current winner through an OpenAI-compatible API.
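To make "OpenAI-compatible" concrete, here is a minimal sketch of what a request could look like. It only builds the request and does not send it. The base URL, the API key placeholder, and the idea of passing a preset name in the `model` field are all assumptions for illustration; the post does not specify Precia's actual endpoint or naming. The path and body follow the standard OpenAI chat completions shape.

```python
import json
import urllib.request

# Hypothetical values: the post does not give Precia's real base URL
# or say how a preset is addressed in the "model" field.
PRECIA_BASE_URL = "https://precia.example/v1"
PRESET_MODEL = "preset/support-bot"


def build_chat_request(base_url, api_key, model, user_message):
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request(
    PRECIA_BASE_URL, "YOUR_API_KEY", PRESET_MODEL, "Where is my order?"
)
# Sending it would be: urllib.request.urlopen(request)
```

If the API is OpenAI-compatible in the usual sense, an existing OpenAI client should also work by changing only its base URL and key, so application code would not need to name a specific model.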

What’s live in alpha:

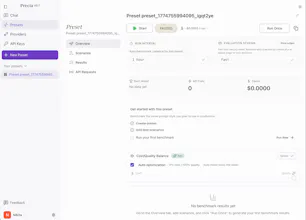

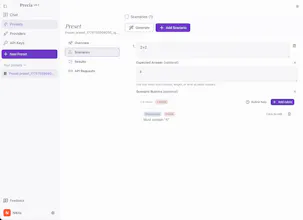

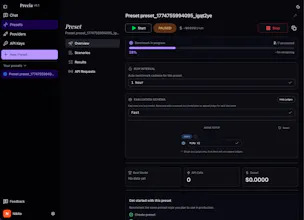

- Create presets with custom scenarios and scoring rules

- Generate draft scenarios from a plain-English description or existing chat history

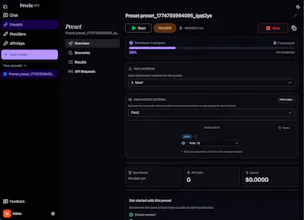

- Benchmark a curated set of models for each use case, then return a ranked top 3 with automatic fallback when the current winner degrades

- Re-run benchmarks to catch drift and keep routing current

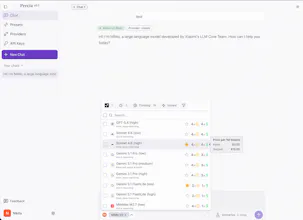

- Side-by-side chat to compare models directly, or test prompts against your saved presets

- Chat history is stored locally in your browser (IndexedDB)
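The ranked top 3 with automatic fallback described in the list above can be sketched roughly as follows. This is not Precia's implementation: the `BenchmarkResult` fields, the tie-break on cost, and the choice to fall back when a call raises an error are assumptions made for illustration. The post says fallback triggers when the winner "degrades", which may cover more than hard failures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BenchmarkResult:
    model: str
    score: float        # mean judge score in [0, 1], higher is better
    cost_per_1k: float  # illustrative cost per 1k requests


def rank_top(results, k=3):
    """Sort by score descending, break ties by lower cost, keep the top k."""
    return sorted(results, key=lambda r: (-r.score, r.cost_per_1k))[:k]


def route_with_fallback(ranked, call_model):
    """Try each ranked model in order; return (model, reply) on first success."""
    errors = []
    for result in ranked:
        try:
            return result.model, call_model(result.model)
        except Exception as exc:  # simplification: any error triggers fallback
            errors.append(f"{result.model}: {exc}")
    raise RuntimeError("all ranked models failed: " + "; ".join(errors))


# Made-up benchmark numbers, purely for the demo.
results = [
    BenchmarkResult("model-a", 0.91, 4.0),
    BenchmarkResult("model-b", 0.91, 1.5),
    BenchmarkResult("model-c", 0.80, 0.5),
    BenchmarkResult("model-d", 0.60, 0.2),
]
ranked = rank_top(results)


def fake_call(model):
    """Stand-in for a real API call; the current winner is simulated as down."""
    if model == "model-b":
        raise TimeoutError("upstream timeout")
    return f"ok from {model}"


chosen, reply = route_with_fallback(ranked, fake_call)
```

Here `model-b` wins the ranking because it ties on score and costs less, but the simulated outage pushes traffic to the runner-up, `model-a`. Re-running the benchmark would simply produce a new `ranked` list for the router to use.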

Still early:

- Team features

- More advanced routing controls

- Shadow routing

Pricing today: Precia is free in alpha. You bring your own OpenRouter key.

Built on Cloudflare Workers at the edge, so the routing layer stays fast globally.

I’m launching to learn whether this is a real problem for teams already shipping AI features.

If you run LLMs in production, what would you need to trust automatic model routing?