QuarterBit AXIOM

Train 70B AI models on 1 GPU instead of 11

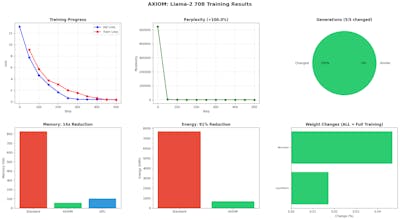

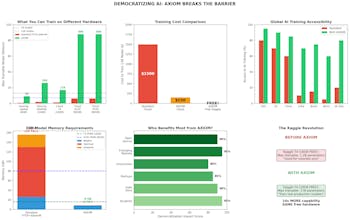

QuarterBit AXIOM makes large AI model training accessible to everyone.

THE PROBLEM: Training a 70B-parameter model needs 840GB of GPU memory, or 11 A100 GPUs at $30+/hour. Only big tech can afford this.

THE SOLUTION: AXIOM compresses training memory 15x, allowing:

• 70B models on 1 GPU (was 11)
• 13B models FREE on Kaggle T4
• 90% cost reduction
• 91% energy reduction

NOT LoRA OR ADAPTERS: 100% of parameters are trainable. Full fine-tuning, not parameter-efficient tricks.
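The memory figures above can be sanity-checked with some back-of-the-envelope arithmetic. The sketch below is not AXIOM code and says nothing about how the compression works. It only reproduces the quoted numbers under stated assumptions: 12 bytes of training state per parameter (chosen because it yields the 840GB figure; the listing does not give a breakdown), an 80GB A100, and a 16GB T4. The function and constant names are illustrative.

```python
import math

# Assumptions (not stated in the listing):
BYTES_PER_PARAM = 12  # chosen to match the quoted 840GB, e.g. fp32 weights + two fp32 Adam moments
A100_GB = 80          # assumes the 80GB A100 variant
T4_GB = 16            # NVIDIA T4 memory size
COMPRESSION = 15      # the 15x figure claimed in the listing


def training_memory_gb(params_billion, bytes_per_param=BYTES_PER_PARAM):
    """Approximate training-state memory in GB (1 GB = 1e9 bytes)."""
    return params_billion * bytes_per_param


def gpus_needed(memory_gb, gpu_gb):
    """Smallest number of GPUs whose combined memory covers memory_gb."""
    return math.ceil(memory_gb / gpu_gb)


baseline_70b = training_memory_gb(70)                # uncompressed 70B footprint
compressed_70b = baseline_70b / COMPRESSION          # footprint after 15x compression
compressed_13b = training_memory_gb(13) / COMPRESSION

print(f"70B baseline:   {baseline_70b} GB on {gpus_needed(baseline_70b, A100_GB)} A100s")
print(f"70B compressed: {compressed_70b} GB on {gpus_needed(compressed_70b, A100_GB)} A100")
print(f"13B compressed: {compressed_13b:.1f} GB, fits on a T4: {compressed_13b <= T4_GB}")
```

Under these assumptions the numbers are consistent with the claims: 840GB spread over 80GB cards rounds up to 11 GPUs, and a 15x reduction brings the 70B footprint under a single card. Activations and framework overhead are ignored, so real usage would be higher.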