Launching today

CoPaw

Self-hosted AI assistant with memory that sticks

Self-hosted AI assistant with persistent memory, local model support (Ollama, llama.cpp, MLX), modular skills, and native integrations for Discord, iMessage, Feishu, and DingTalk. Open source.

Here's the problem nobody talks about: AI assistants don't accumulate.

You use them every day. They stay dumb about you. No memory of your preferences. No awareness of ongoing work. You do all the re-explaining. Every. Single. Time.

That's not a UX problem. It's an architecture problem. These tools were built to respond, not to remember.

CoPaw is Alibaba's open-source answer to that. Self-hosted personal AI agent. Persistent memory. Local models. Modular skills. And it lives inside the channels you already use: Discord, iMessage, Feishu, DingTalk.

Three things that stood out:

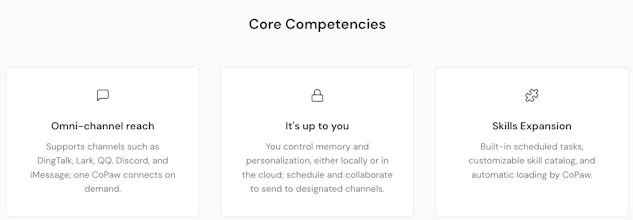

Memory that sticks. Local vector search, no database setup, fully cross-platform. Your preferences and tasks persist across sessions. No more "as I mentioned earlier..."

Your models, your hardware. Ollama, llama.cpp, MLX, private endpoints. No forced cloud dependency. No data leaving your machine unless you want it to.

Extensible by default. Skills hub, hot-swappable MCP (Model Context Protocol) servers, cron-scheduled agentic workflows. It compounds. It grows as you do.
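To make "memory that sticks" concrete, here is a minimal Python sketch of local vector search persisted to a plain file. This is not CoPaw's implementation: `MemoryStore`, the toy hashing-based `embed` function, and the JSON file format are all assumptions made for illustration, and a real setup would swap in a proper embedding model.

```python
import hashlib
import json
import math
import os
import re
from typing import List

DIM = 256  # toy embedding size (assumption, not a CoPaw setting)


def embed(text: str) -> List[float]:
    """Toy bag-of-words hashing embedding; a stand-in for a real embedding model."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        idx = int(hashlib.md5(token.encode("utf-8")).hexdigest(), 16) % DIM
        vec[idx] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


class MemoryStore:
    """Hypothetical file-backed memory: no database, just a JSON file on disk."""

    def __init__(self, path: str) -> None:
        self.path = path
        self.items = []
        if os.path.exists(path):
            # Reload whatever earlier sessions saved.
            with open(path, encoding="utf-8") as f:
                self.items = json.load(f)

    def remember(self, text: str) -> None:
        """Store a note with its vector and write everything back to disk."""
        self.items.append({"text": text, "vec": embed(text)})
        with open(self.path, "w", encoding="utf-8") as f:
            json.dump(self.items, f)

    def recall(self, query: str, k: int = 1) -> List[str]:
        """Return the k stored notes most similar to the query (cosine similarity)."""
        q = embed(query)
        ranked = sorted(
            self.items,
            key=lambda item: sum(a * b for a, b in zip(q, item["vec"])),
            reverse=True,
        )
        return [item["text"] for item in ranked[:k]]
```

Create a second `MemoryStore` on the same path and the earlier notes are still there. That's the whole idea: the assistant reloads what it learned instead of asking you again.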

It's v0.0.3. You're early. But that's the point.

The roadmap item I'm personally watching most closely: the large-small model collaboration mode. Local models handle sensitive data. Cloud models handle heavy reasoning. That's not just a performance optimization. It's the only privacy architecture that actually makes sense for an agent that knows everything about you.
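Since this mode is still a roadmap item, there is nothing official to show yet. But the routing idea itself is simple enough to sketch. The Python below is purely illustrative: `SENSITIVE_PATTERNS`, `is_sensitive`, and `route` are hypothetical names, and a real router would need far better sensitivity detection than a few regexes.

```python
import re

# Illustrative patterns only (assumptions): what counts as "sensitive" is up to you.
SENSITIVE_PATTERNS = [
    re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),  # email addresses
    re.compile(r"\b(?:\d[ -]?){13,16}\b"),    # card-like runs of 13 to 16 digits
    re.compile(r"\b(password|api[_ ]?key|ssn|salary|diagnosis)\b", re.IGNORECASE),
]


def is_sensitive(text: str) -> bool:
    """True if any of the illustrative patterns matches the text."""
    return any(pattern.search(text) for pattern in SENSITIVE_PATTERNS)


def route(prompt: str) -> str:
    """Pick a backend label: sensitive prompts stay local, the rest may go to the cloud."""
    return "local" if is_sensitive(prompt) else "cloud"


if __name__ == "__main__":
    for prompt in ("My password is hunter2", "Summarize the history of the Roman Empire"):
        print(f"{route(prompt):5s} <- {prompt}")
```

The design choice that matters is the default. This sketch sends anything not flagged to the cloud; a stricter version would flip that and send only explicitly cleared prompts out.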

The founders are here today. If you're building on top of CoPaw or have a use case you'd want prioritized, drop it in the comments.

One question for the community: what's the one thing your current AI assistant keeps forgetting about you that you're tired of re-explaining?